This project explores how complex information can be simplified into something digestible enough to interact with.

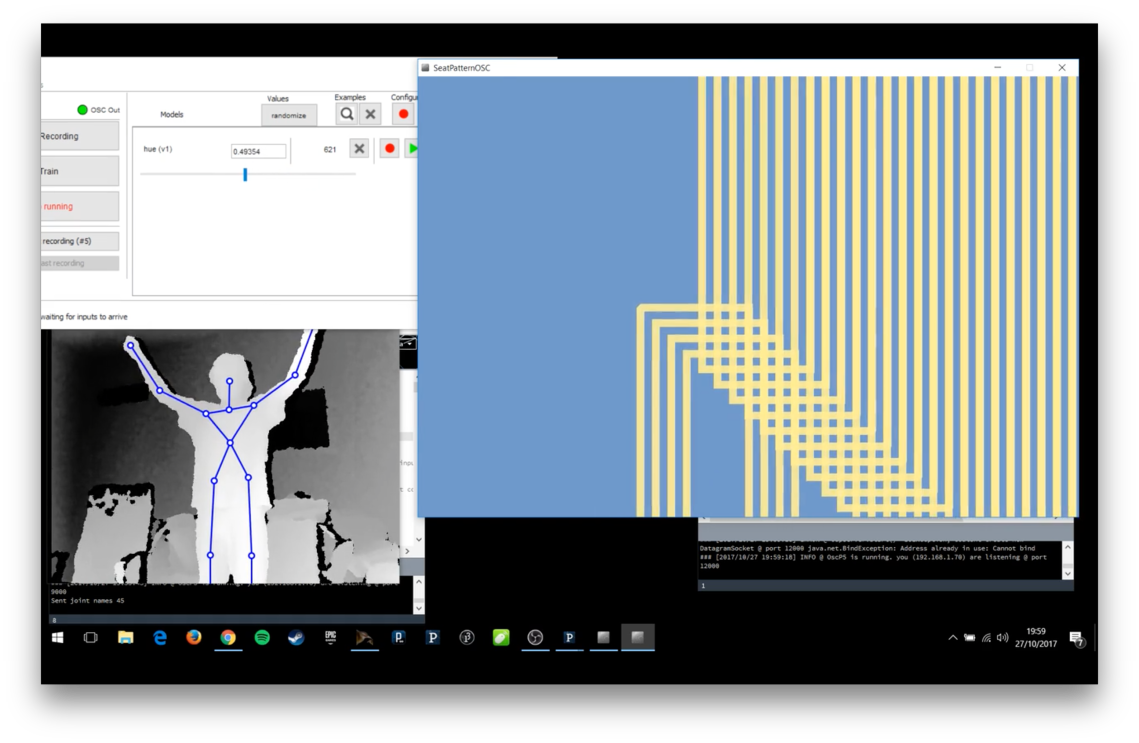

Students used Wekinator, a machine learning tool, to explore their chosen interactions. They developed a range of responses using web cameras, Kinect motion trackers and facial recognition software to translate complex data streams into poetic, visual and auditory outputs.

Mara Childs – Sound Colour Rain

Paul Lersveen